Artificial Intelligence (AI) has rapidly transformed many creative and technical industries, and 3D creation is among the fields changing the most. Traditionally, creating 3D models required highly skilled artists using complex software such as Blender, Maya, or 3ds Max. These workflows involved manual polygon modeling, sculpting, UV mapping, texturing, lighting, and rendering, and a single asset often took hours or days to complete.

Today, AI can generate 3D models from text, images, sketches, or videos, dramatically accelerating production pipelines in industries such as gaming, film, architecture, robotics, and manufacturing.

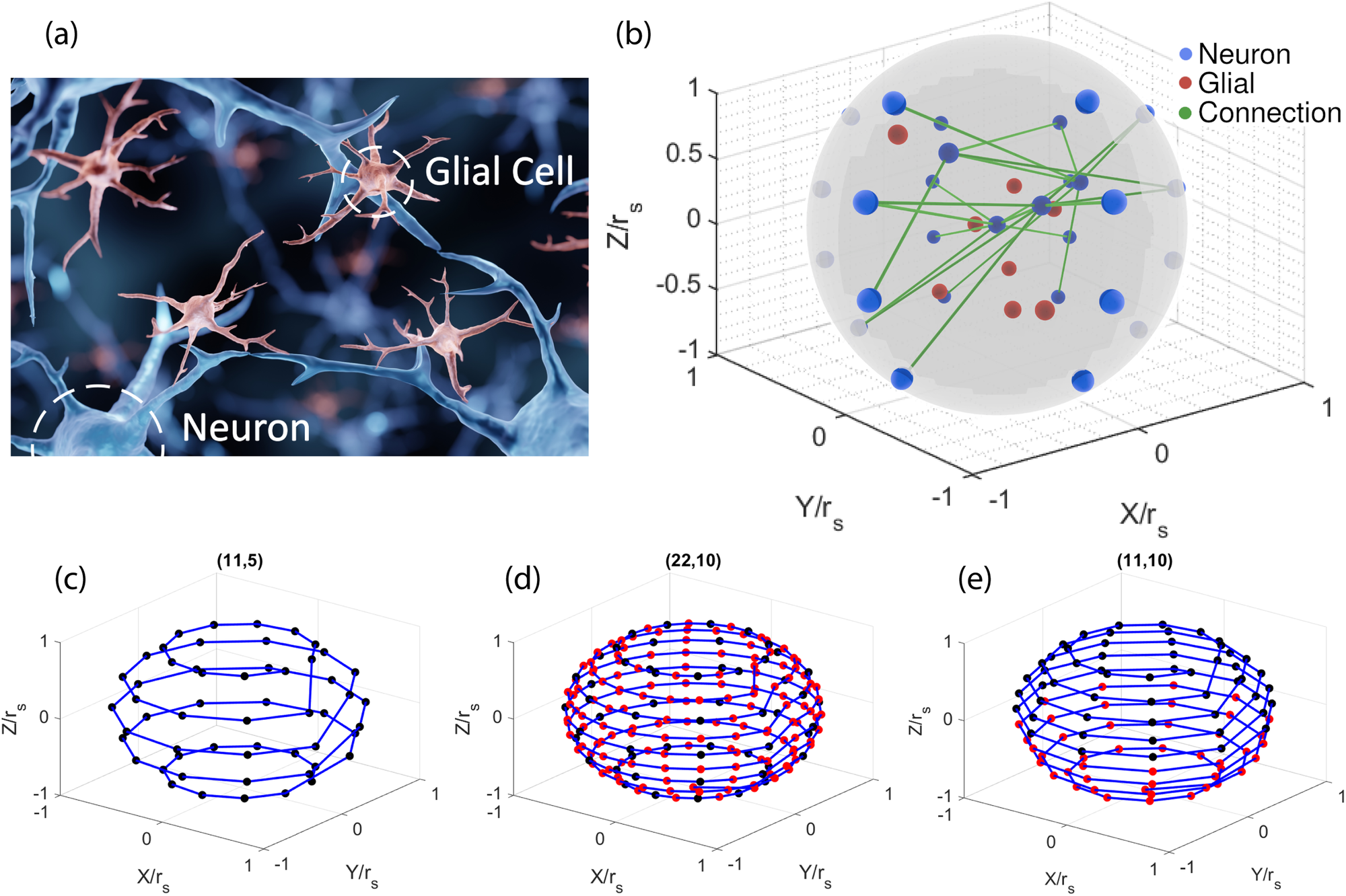

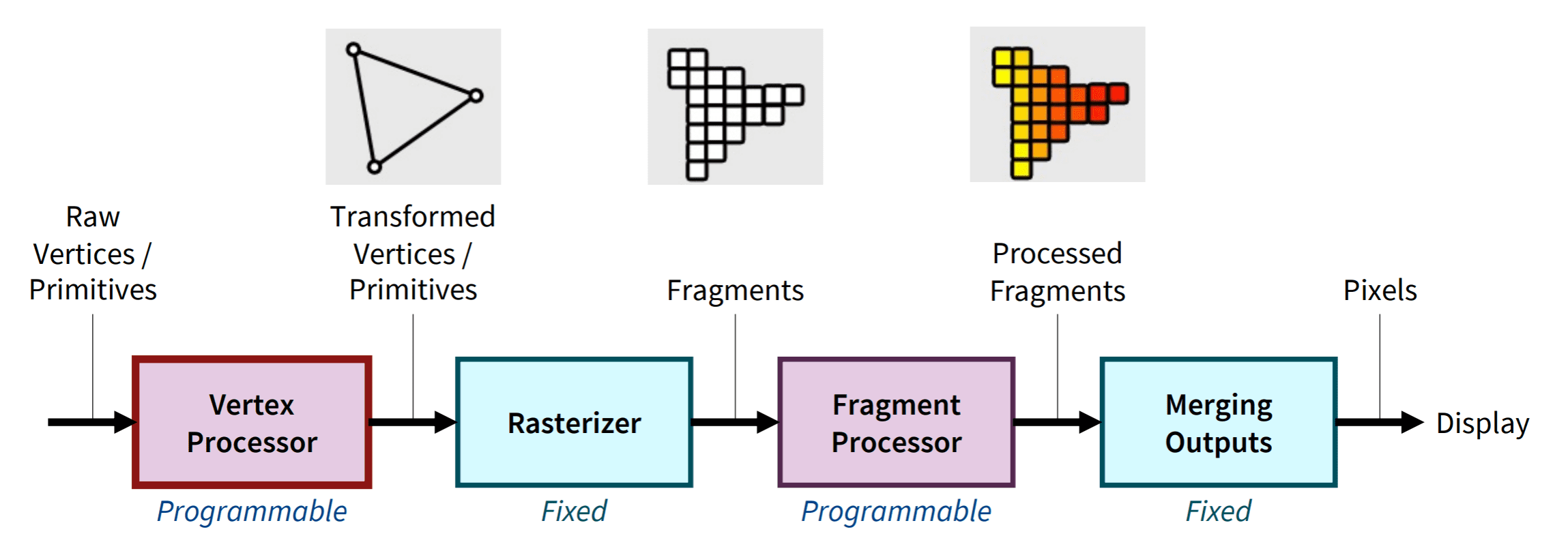

AI-powered tools use machine learning and deep neural networks to analyze large datasets of 3D objects and learn how shapes, textures, and structures are formed. Once trained, these systems can automatically generate new models based on user input.

Generative AI is capable of producing new digital content—including images, videos, and 3D models—by learning patterns from training data.

This article explains how AI covers the entire 3D workflow step-by-step, from understanding input to generating finished assets.

The first stage of AI-based 3D generation is input interpretation.

AI systems typically accept three types of input: text prompts, images, and sketches.

With a text prompt, users describe the object they want.

Example:

“A futuristic flying car with neon lights”

The AI interprets the prompt using Natural Language Processing (NLP) and converts it into a structured representation of shapes, materials, and proportions.

With image input, users upload an image or reference photo.

The AI analyzes the image and then reconstructs a 3D representation from it.

Modern systems can convert a single photograph into a 3D object by estimating depth and structure.
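Once a depth value has been estimated for each pixel, turning the image into 3D points is plain geometry. The sketch below assumes a pinhole camera and takes the depth map as given; the function name `backproject_depth`, the tiny depth map, and the intrinsics (`fx`, `fy`, `cx`, `cy`) are all made up for illustration. A real system would predict the depth with a neural network.

```python
from typing import List, Tuple

Point = Tuple[float, float, float]


def backproject_depth(depth: List[List[float]], fx: float, fy: float,
                      cx: float, cy: float) -> List[Point]:
    """Lift each pixel (u, v) with depth z to a 3D point in camera space."""
    points: List[Point] = []
    for v, row in enumerate(depth):
        for u, z in enumerate(row):
            if z <= 0:
                # Treat non-positive depth as "no estimate" and skip the pixel.
                continue
            x = (u - cx) * z / fx
            y = (v - cy) * z / fy
            points.append((x, y, z))
    return points


# Hypothetical 2x2 depth map and made-up camera intrinsics.
depth_map = [[2.0, 2.0],
             [0.0, 4.0]]
cloud = backproject_depth(depth_map, fx=1.0, fy=1.0, cx=0.5, cy=0.5)
```

The result is only a point cloud for the visible side of the object; filling in the unseen back is where the learned model does the real work.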

AI can also generate models from simple sketches. For example, research systems can convert a 2D drawing into a 3D CAD object by automatically performing design actions similar to an engineer using CAD software.

AI cannot generate 3D models without training data.

Training involves feeding the AI system thousands or millions of example 3D objects.

From these, the neural network learns patterns such as how shapes, textures, and structures are formed.

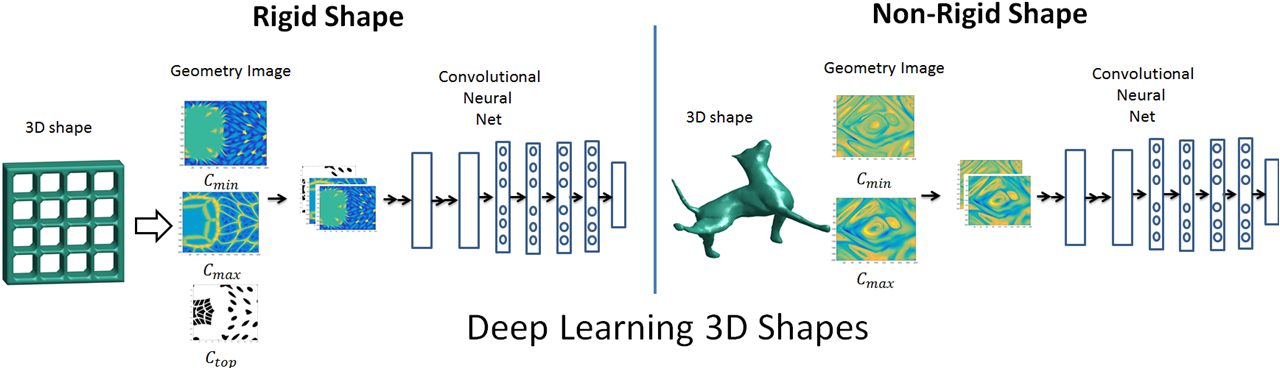

Several machine learning architectures are used for this. These models analyze existing 3D data and learn to create new shapes based on learned features.

The process is similar to how image generators like Midjourney or DALL-E learn from large image datasets.

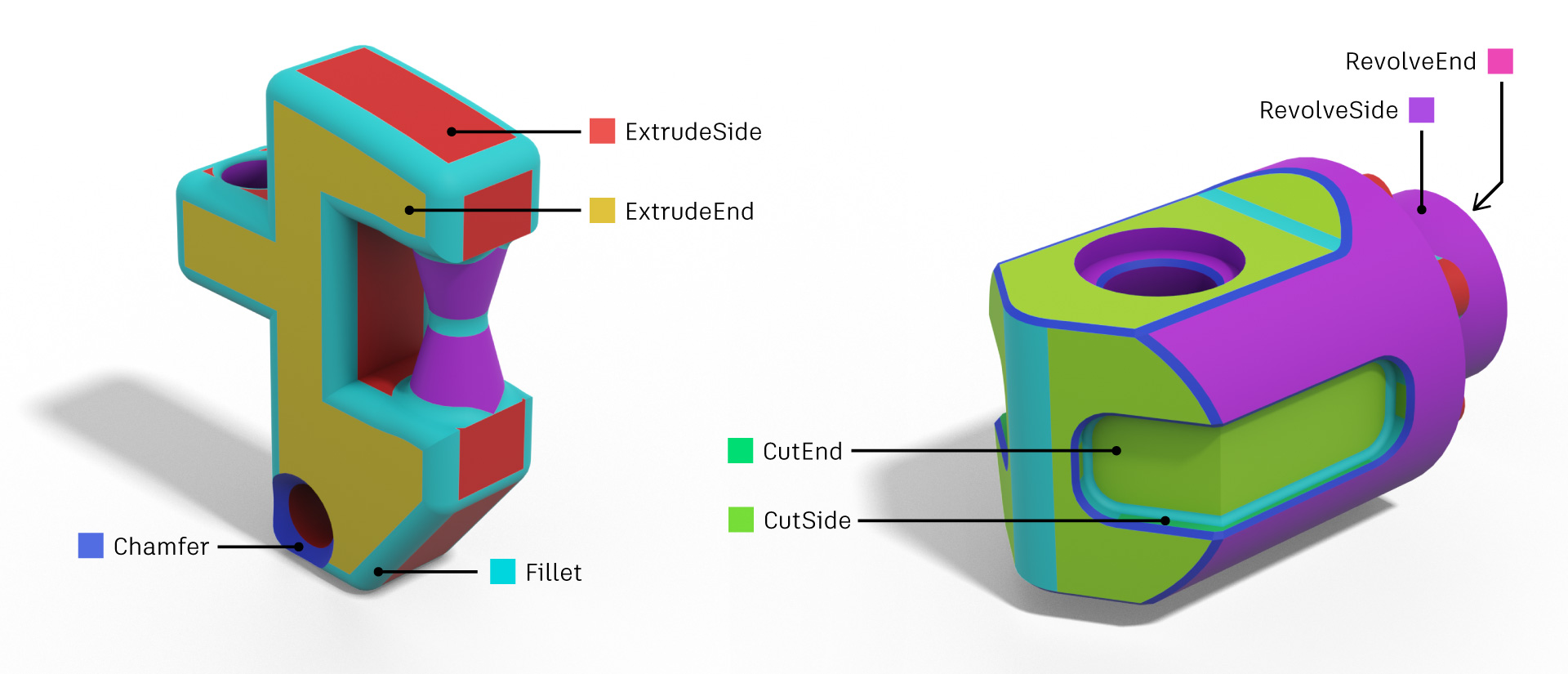

Once the AI understands the input prompt and has learned from datasets, it begins generating geometry.

This stage defines the structure of the object.

Several techniques are used: polygon mesh generation, neural radiance fields (NeRF), and diffusion models.

The AI generates polygon meshes consisting of vertices, edges, and faces.

These polygons form the surface of the object.
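A polygon mesh is commonly stored as a list of vertex positions plus a list of faces that index into it, with edges derived from the faces. The sketch below builds a tetrahedron this way; the helper name `edges_from_faces` is made up for illustration, and the layout is one common convention rather than the format of any particular tool.

```python
from typing import List, Set, Tuple

Vertex = Tuple[float, float, float]
Face = Tuple[int, int, int]          # indices into the vertex list


def edges_from_faces(faces: List[Face]) -> Set[Tuple[int, int]]:
    """Collect the unique undirected edges implied by triangular faces."""
    edges: Set[Tuple[int, int]] = set()
    for a, b, c in faces:
        for i, j in ((a, b), (b, c), (c, a)):
            # Store each edge with the smaller index first so (1, 2) and
            # (2, 1) count as the same edge.
            edges.add((min(i, j), max(i, j)))
    return edges


# A tetrahedron: 4 vertices and 4 triangular faces.
vertices: List[Vertex] = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0),
                          (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)]
faces: List[Face] = [(0, 2, 1), (0, 1, 3), (0, 3, 2), (1, 2, 3)]

edges = edges_from_faces(faces)
# For a closed surface without holes, V - E + F equals 2.
euler_characteristic = len(vertices) - len(edges) + len(faces)
```

Checking that `euler_characteristic` is 2 is a cheap sanity test that a generated mesh is closed and free of stray faces.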

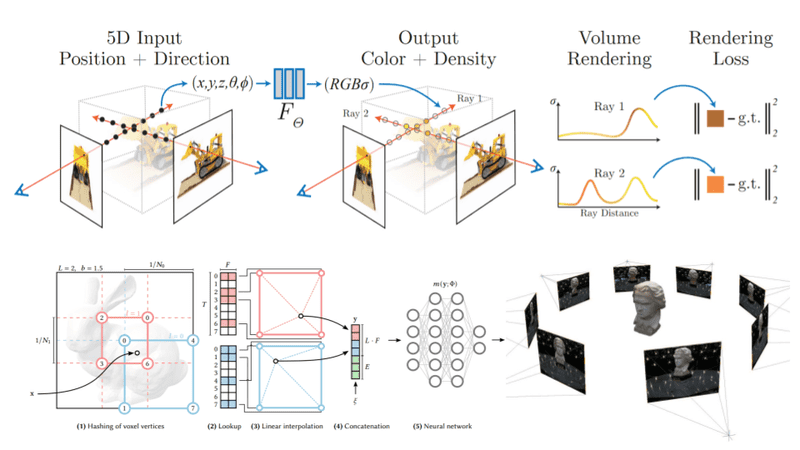

Neural Radiance Field (NeRF) technology generates a 3D scene by learning, from multiple images, the color and density at each point in space.

Using several photographs of an object, NeRF reconstructs a realistic 3D representation.
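The rendering step behind NeRF can be sketched without any neural network: samples along a camera ray are blended front to back, each weighted by its opacity and by how much light survives to reach it. In the sketch below the densities and colors are hard-coded, whereas in a real NeRF they come from the trained network; the function name `composite_ray` and the sample values are illustrative assumptions.

```python
import math
from typing import Sequence, Tuple

Color = Tuple[float, float, float]


def composite_ray(densities: Sequence[float], colors: Sequence[Color],
                  deltas: Sequence[float]) -> Tuple[Color, float]:
    """Blend samples along one ray, front to back.

    Returns the accumulated color and the remaining transmittance.
    """
    transmittance = 1.0              # fraction of light not yet absorbed
    out = [0.0, 0.0, 0.0]
    for sigma, color, delta in zip(densities, colors, deltas):
        alpha = 1.0 - math.exp(-sigma * delta)   # opacity of this segment
        weight = transmittance * alpha
        for k in range(3):
            out[k] += weight * color[k]
        transmittance *= 1.0 - alpha
    return (out[0], out[1], out[2]), transmittance


# Made-up samples: empty space, then a fully opaque green surface,
# then a blue sample hidden behind it.
pixel, remaining = composite_ray(
    densities=[0.0, 1e9, 5.0],
    colors=[(1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)],
    deltas=[0.1, 0.1, 0.1],
)
```

Because every operation here is differentiable, training can compare the rendered pixel against the photograph and adjust the predicted densities and colors.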

Diffusion models gradually transform random noise into structured 3D shapes guided by text or images.

Recent research integrates diffusion with neural fields to generate realistic models.
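The noise side of a diffusion model can be sketched in a few lines. The example below uses the standard noising formula on a flattened list of coordinates and shows that a correct noise prediction is enough to recover the clean shape. It is a toy under stated assumptions: the true noise stands in for a trained network's prediction, and the names `add_noise`, `estimate_clean`, and the value of `alpha_bar` are chosen for illustration.

```python
import math
import random
from typing import List


def add_noise(x0: List[float], alpha_bar: float, eps: List[float]) -> List[float]:
    """Forward process: mix clean data with Gaussian noise."""
    a, b = math.sqrt(alpha_bar), math.sqrt(1.0 - alpha_bar)
    return [a * x + b * e for x, e in zip(x0, eps)]


def estimate_clean(xt: List[float], alpha_bar: float,
                   eps_pred: List[float]) -> List[float]:
    """Invert the forward formula given a prediction of the noise."""
    a, b = math.sqrt(alpha_bar), math.sqrt(1.0 - alpha_bar)
    return [(x - b * e) / a for x, e in zip(xt, eps_pred)]


rng = random.Random(0)
shape = [0.0, 1.0, 0.5, -0.5]                 # flattened coordinates
eps = [rng.gauss(0.0, 1.0) for _ in shape]    # noise that gets added
noisy = add_noise(shape, alpha_bar=0.3, eps=eps)

# Assumption: a trained network would predict eps from `noisy` and the
# text or image condition. Here the true noise is passed in instead.
recovered = estimate_clean(noisy, alpha_bar=0.3, eps_pred=eps)
```

In practice the prediction is imperfect, so generation repeats this estimate over many small steps, starting from pure noise.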

These methods enable AI to build a complete 3D structure automatically.

After geometry is created, the next step is texturing.

Texturing adds color and surface detail to the bare geometry.

AI automatically generates the textures and materials for the model.

This step is essential for realism.

Traditional workflows required artists to paint textures manually. AI now automates this process.

AI can also generate multiple design variations automatically, enabling designers to explore different styles rapidly.

AI-generated models must be optimized for real-time applications like games or VR.

Optimization includes:

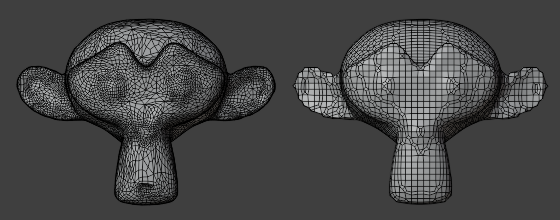

Retopology: converting messy geometry into a clean polygon structure.

Polygon reduction: reducing polygon count for better performance.

UV unwrapping: unwrapping the model so textures can be applied correctly.

AI tools now automate many of these tasks, which previously required manual work by artists.
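Polygon reduction can be illustrated with vertex clustering, one of the simplest classical techniques: vertices that fall into the same grid cell are merged into their average, and triangles that collapse are dropped. This is a minimal sketch, not what any specific AI tool does; the function name `decimate_by_clustering` and the sample mesh are made up for illustration.

```python
import math
from typing import Dict, List, Tuple

Vertex = Tuple[float, float, float]
Face = Tuple[int, int, int]


def decimate_by_clustering(vertices: List[Vertex], faces: List[Face],
                           cell_size: float) -> Tuple[List[Vertex], List[Face]]:
    """Merge vertices sharing a grid cell and drop collapsed triangles."""
    cell_to_new: Dict[Tuple[int, int, int], int] = {}
    sums: List[List[float]] = []
    counts: List[int] = []
    remap: List[int] = []            # old vertex index -> new vertex index
    for x, y, z in vertices:
        cell = (math.floor(x / cell_size),
                math.floor(y / cell_size),
                math.floor(z / cell_size))
        idx = cell_to_new.get(cell)
        if idx is None:
            idx = len(sums)
            cell_to_new[cell] = idx
            sums.append([0.0, 0.0, 0.0])
            counts.append(0)
        sums[idx][0] += x
        sums[idx][1] += y
        sums[idx][2] += z
        counts[idx] += 1
        remap.append(idx)
    # Each merged vertex sits at the average of the vertices in its cell.
    new_vertices = [(s[0] / n, s[1] / n, s[2] / n) for s, n in zip(sums, counts)]
    new_faces: List[Face] = []
    for a, b, c in faces:
        a, b, c = remap[a], remap[b], remap[c]
        if a != b and b != c and a != c:     # skip degenerate triangles
            new_faces.append((a, b, c))
    return new_vertices, new_faces


# Made-up strip of three triangles with two pairs of nearby vertices.
verts = [(0.0, 0.0, 0.0), (0.2, 0.0, 0.0), (1.0, 0.0, 0.0),
         (0.0, 1.0, 0.0), (0.2, 1.0, 0.0)]
tris = [(0, 1, 3), (1, 4, 3), (1, 2, 4)]
low_verts, low_tris = decimate_by_clustering(verts, tris, cell_size=0.5)
```

A larger `cell_size` merges more vertices and removes more triangles, trading detail for performance.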

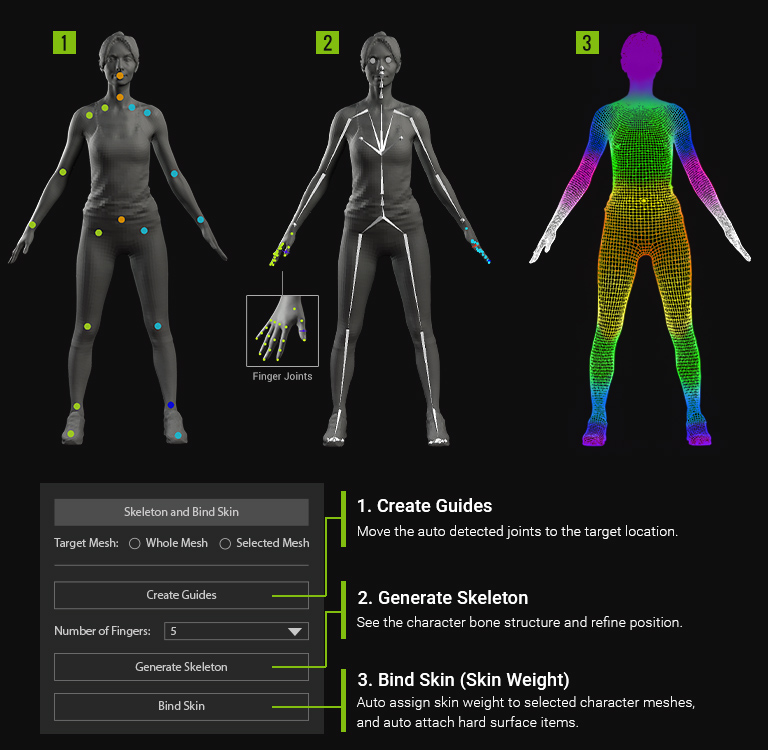

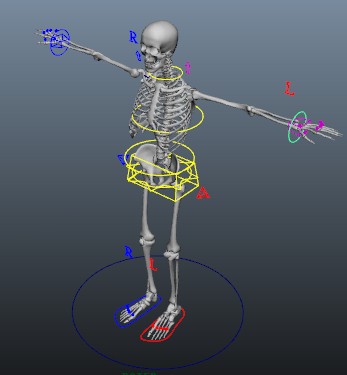

For characters and animated objects, AI also assists with rigging and animation.

AI systems can automate parts of this work, which drastically reduces animation production time.

Once the model is complete, the scene must be rendered.

AI enhances the rendering process and also helps with simulation.

These technologies make digital scenes far more realistic.

AI-driven 3D creation is transforming many industries.

Gaming: AI can generate characters, environments, and props quickly.

Film: studios can create complex scenes faster.

Architecture: AI can generate building prototypes and visualizations.

Manufacturing: AI-generated designs are used in product development.

Robotics: robots use AI to understand 3D environments.

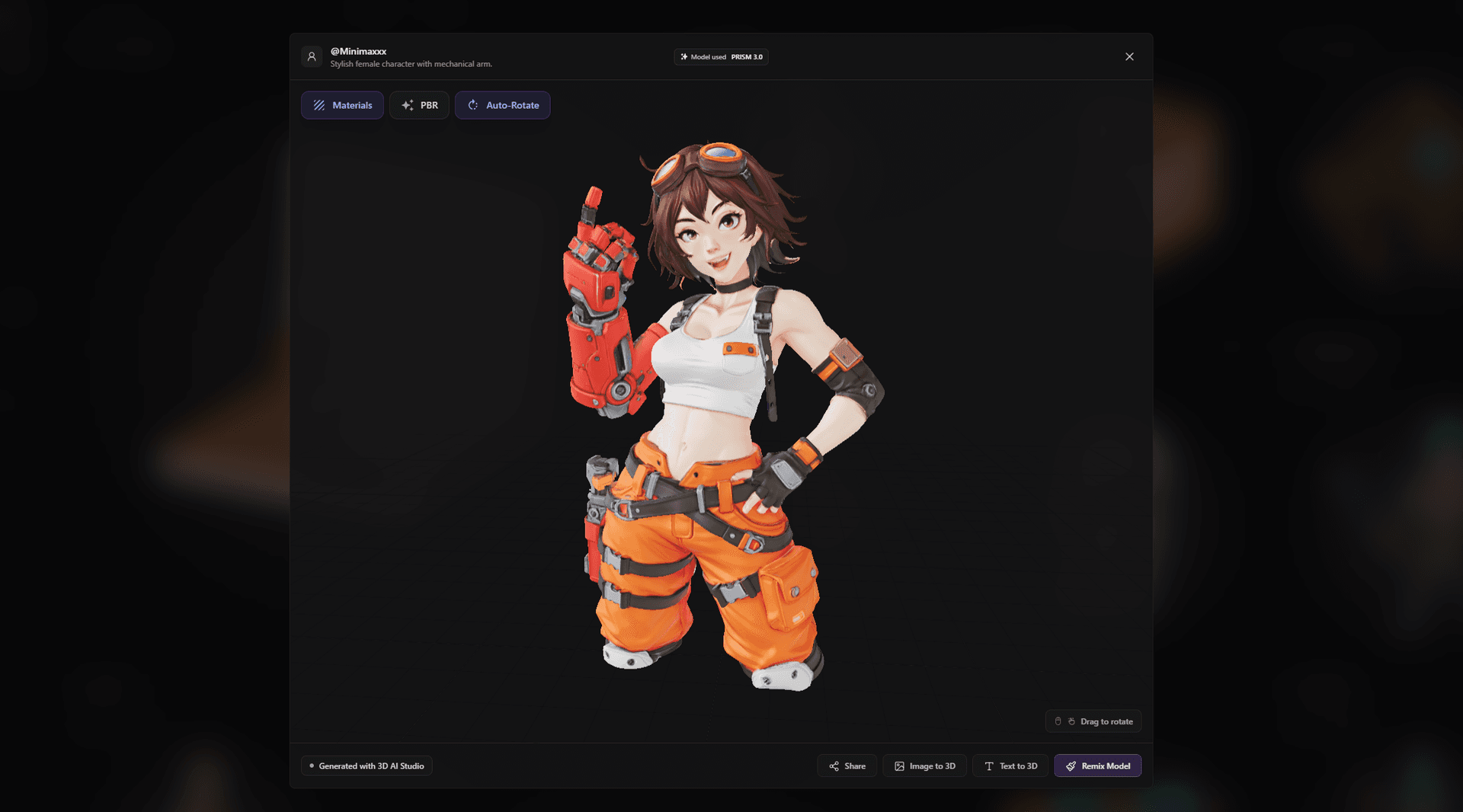

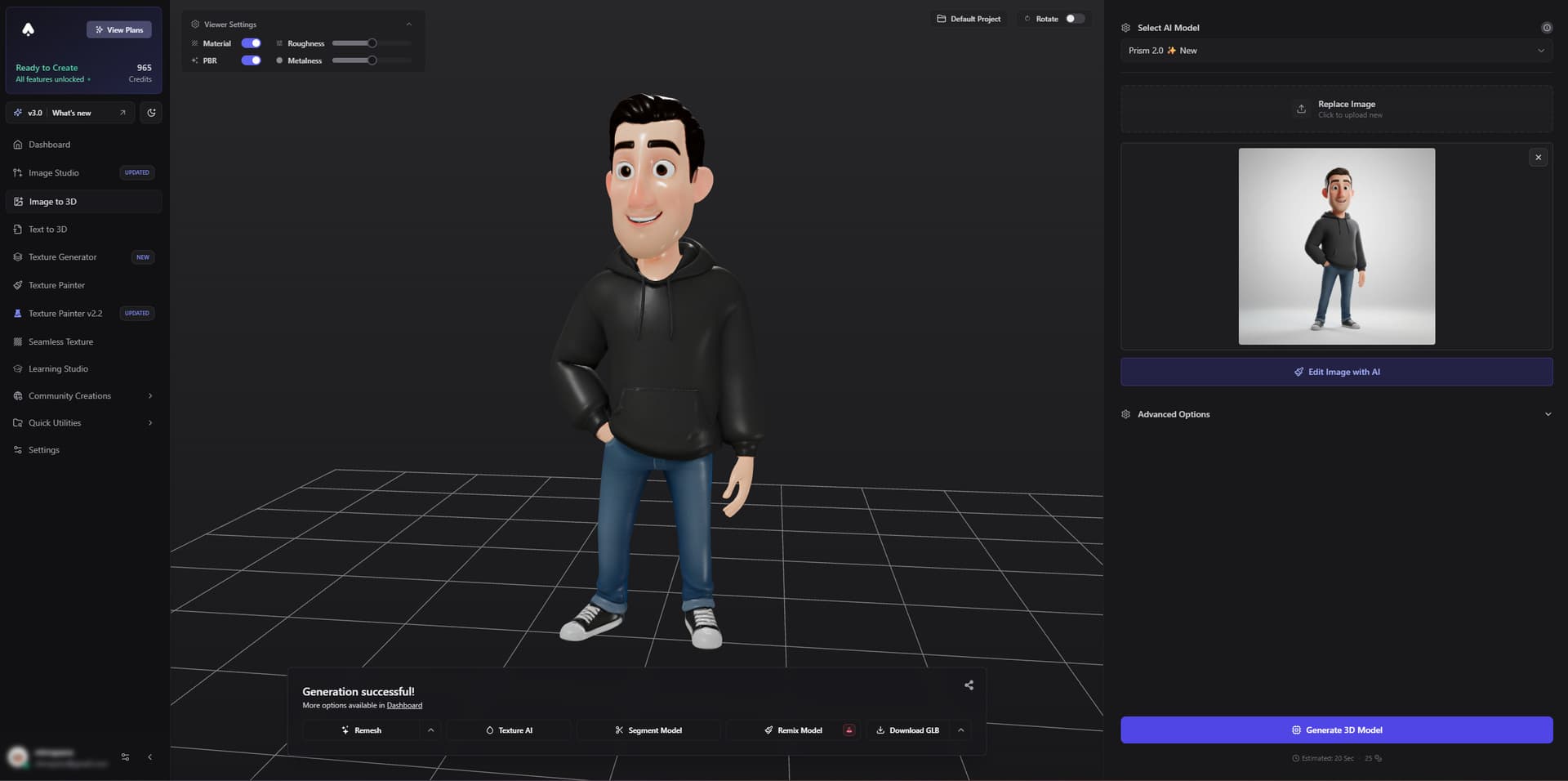

In a text-to-3D workflow, a simple text prompt can generate a 3D object automatically.

In an image-to-3D workflow, users upload photos and generate full 3D assets.

The global generative-AI 3D asset market is expected to grow significantly in the coming years as industries adopt these tools.

Despite its advantages, AI-generated 3D still faces challenges.

Some models lack precise geometry.

AI requires large training datasets.

Training datasets may include copyrighted models.

Human artists are still needed to refine results.

Recent developments suggest that AI may soon generate entire interactive 3D worlds from text prompts.

Some experimental systems can already build playable environments instantly from text instructions.

Major tech companies are also developing models that convert single photos into accurate 3D reconstructions in real time.

In the future, AI may enable:

Artificial Intelligence is fundamentally transforming the 3D creation pipeline. What once required teams of expert modelers and animators can now be partially automated using generative AI technologies.

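The AI-driven 3D workflow typically involves input interpretation, training on 3D datasets, geometry generation, texturing, optimization, rigging and animation, and rendering.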

Through techniques such as diffusion models, neural radiance fields, and deep learning, AI systems can generate realistic 3D assets quickly and efficiently.

Although the technology still requires human supervision, it has already reshaped industries such as gaming, film, architecture, robotics, and manufacturing. As research advances, AI will likely move beyond creating individual models and begin generating complete immersive virtual worlds.

The future of digital creation is increasingly AI-assisted, automated, and interactive, opening new opportunities for artists, engineers, and developers worldwide.